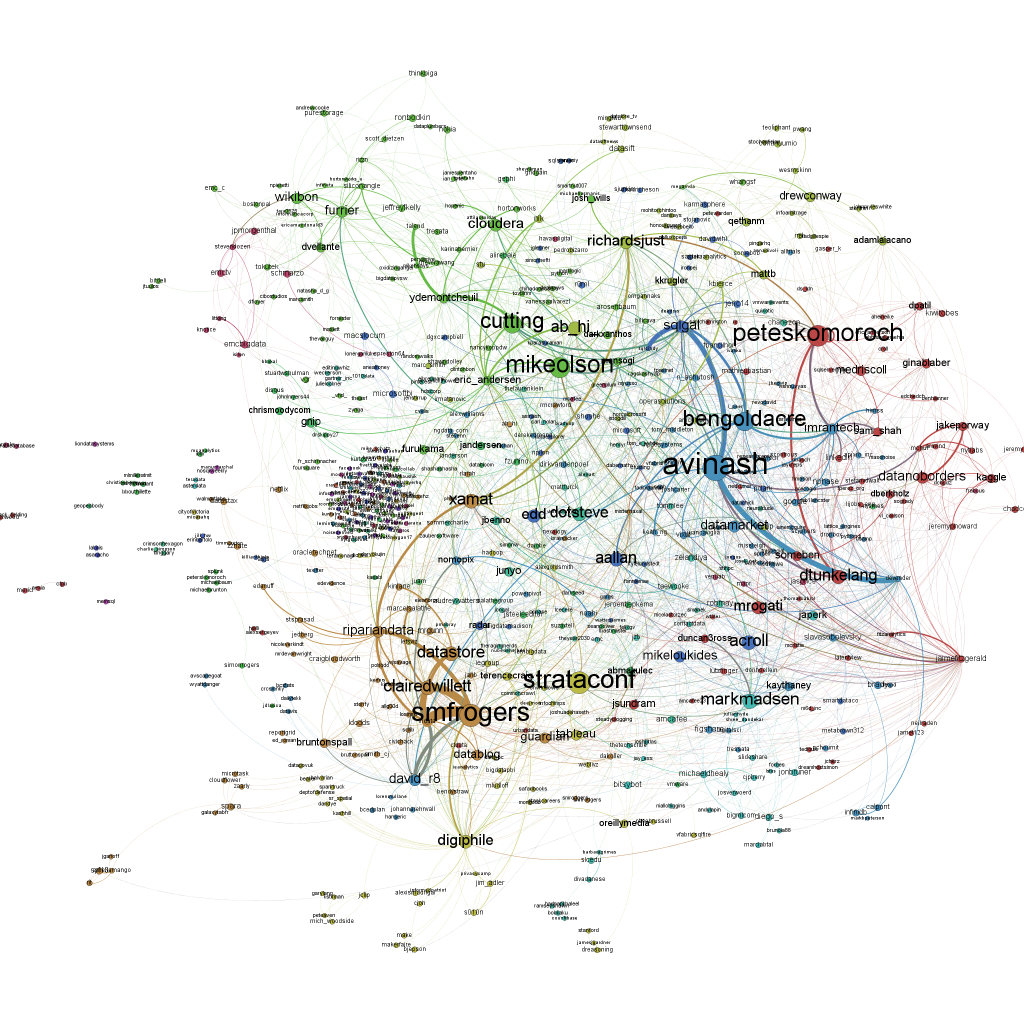

Here’s a network visualization of all tweets referring to the hashtag “#strataconf”. Node size represents the number of incoming links, i.e. the number of times a person has been mentioned in other people’s tweets.

This network map was generated in three steps:

1) Data collection: I collected the Twitter data with the open source application YourTwapperKeeper (yTK). This is the DIY version of the TwapperKeeper platform, which had been very popular in the scientific community. Unfortunately, since the acquisition by HootSuite, the platform is no longer available to the general public, but John O’Brien has made the scripts available on his GitHub. I am running yTK on an Amazon EC2 instance. It connects to the Twitter Streaming API and fetches all tweets containing “#strataconf” in real time. In addition, it runs regular searches via the Search API to find tweets that the Streaming API missed.

2) Data processing: What is so great about yTK is that it offers different methods to fetch the tweets you collected. I am using the JSON API to download the tweets to my computer. This is done with a Python script. The script opens the JSON file and then scans the tweets for mentions or retweets with the following regular expressions, which I borrowed from Matthew Russell’s book Mining the Social Web:

    import re

    rt_patterns = re.compile(r"(RT|via)((?:\b\W*@\w+)+)", re.IGNORECASE)
    at_pattern = re.compile(r"@(\w+)", re.IGNORECASE)
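To get a feel for what these two patterns pick up, here is a minimal sketch that runs them on two made-up tweets. The sample texts and the variable names `retweet`, `mention`, `retweeted_users`, `mentioned_users` and `is_retweet` are my own illustration, not part of the original script.

```python
import re

rt_patterns = re.compile(r"(RT|via)((?:\b\W*@\w+)+)", re.IGNORECASE)
at_pattern = re.compile(r"@(\w+)", re.IGNORECASE)

# Made-up sample tweets, for illustration only.
retweet = "RT @alice: Great keynote at #strataconf"
mention = "Enjoying #strataconf with @Bob and @carol"

# Group 2 of rt_patterns holds the "@user" run that follows "RT" or "via".
rt_match = rt_patterns.search(retweet)
retweeted_users = at_pattern.findall(rt_match.group(2)) if rt_match else []

# at_pattern returns every user name without the leading "@".
mentioned_users = at_pattern.findall(mention)

# A plain mention should not be flagged as a retweet.
is_retweet = rt_patterns.search(mention) is not None

print(retweeted_users, mentioned_users, is_retweet)
```

One thing worth knowing: the retweet pattern has no word boundary in front of `RT` or `via`, so a text such as "So smart @bob" also matches, because "smart" ends in "rt". For a conference hashtag this probably does not matter much, but it is something to keep in mind.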

Then I am using the very convenient library igraph to write the results to the generic GraphML file format, which can be processed by various network analysis tools. Basically, I am just running this snippet of code on every tweet I found to generate the list of nodes:

    if tweet['from_user'].lower() not in nodes:
        nodes.append(tweet['from_user'].lower())

… and edges:

    for mention in at_pattern.findall(tweet['text']):
        mentioned.append(mention.lower())
        if mention.lower() not in nodes:
            nodes.append(mention.lower())
        edges.append((nodes.index(tweet['from_user'].lower()), nodes.index(mention.lower())))
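The snippets above look up each user with `nodes.index(...)`, which scans the whole list every time. For larger collections, a dictionary that maps user names to node ids would be one way around that. The following is only a sketch of that variant, not the script I actually ran. The two sample tweets, the `node_index` dictionary and the `node_id` helper are hypothetical, and only the `from_user` and `text` fields are taken from the snippets above.

```python
import re

at_pattern = re.compile(r"@(\w+)", re.IGNORECASE)

# Made-up tweets with the 'from_user' and 'text' fields used above.
tweets = [
    {"from_user": "Alice", "text": "Great talk by @Bob at #strataconf"},
    {"from_user": "carol", "text": "RT @alice: Great talk by @Bob at #strataconf"},
]

node_index = {}  # lower-cased user name -> node id
edges = []       # (source id, target id) pairs


def node_id(name):
    """Return the id for a user name, registering it on first sight."""
    return node_index.setdefault(name.lower(), len(node_index))


for tweet in tweets:
    source = node_id(tweet["from_user"])
    for mention in at_pattern.findall(tweet["text"]):
        edges.append((source, node_id(mention)))

# Node names ordered by id, i.e. the same shape as the 'nodes' list above.
nodes = sorted(node_index, key=node_index.get)

print(nodes)
print(edges)
```

The result has the same shape as before: `nodes` is a list of lower-cased user names and `edges` is a list of index pairs pointing from the author to each mentioned user.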

The graph is generated with the following lines of code:

    from igraph import Graph
    g = Graph(len(nodes), directed=True)
    g.vs["label"] = nodes
    g.add_edges(edges)

That is almost all the code needed to process the network data.

3) Visualization: The visualization of the graph is done with the wonderful cross-platform tool Gephi. To generate the graph above, I reduced the network to the nodes that at least one other node refers to. Then I sized the nodes according to their total degree, that is, how often they were mentioned in other people’s tweets plus how often they mentioned other users. The color is determined by the modularity clustering algorithm. Then I used the Force Atlas layout algorithm and voilà, here’s the network map.
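I did the filtering and sizing interactively in Gephi, but the two quantities involved are easy to spell out in plain Python. The sketch below uses a made-up node and edge list in the same format as in step 2. It assumes that self-mentions do not count as "another node referring to it" and that the total degree is taken over the full edge list, neither of which is stated above.

```python
from collections import Counter

# Made-up data in the same format as in step 2: edges are (source, target) ids.
nodes = ["alice", "bob", "carol", "dave"]
edges = [(0, 1), (2, 0), (2, 1), (3, 1)]

# Incoming and outgoing mentions per node; self-mentions are ignored (assumption).
in_degree = Counter(target for source, target in edges if source != target)
out_degree = Counter(source for source, target in edges if source != target)

# Keep only nodes that at least one other node refers to.
kept = [i for i in range(len(nodes)) if in_degree[i] > 0]

# Total degree = mentions received + mentions made.
total_degree = {nodes[i]: in_degree[i] + out_degree[i] for i in kept}

print(total_degree)
```

Here "carol" and "dave" drop out because nobody mentions them, while "bob" ends up as the largest node.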

Nice!